A Preview of Our 50-Step Process for up to 2,500X OpenAI API Cost Reduction

It’s 2023 and AI-powered applications are transforming every industry. Businesses all over the globe are leveraging OpenAI’s APIs to build products that are smarter, more efficient, and more intuitive than ever before.

However, with great power often comes a hefty price tag. Even though AI can unlock massive value for businesses, it can also consume significant resources. We’ve encountered many businesses that put off optimizing costs until they were spending hundreds of thousands of dollars per month on OpenAI’s APIs.

If you’re building your products on top of OpenAI’s APIs, you might be in danger of the same issue. The question then arises, how do you continue harnessing the benefits of AI while keeping costs under control?

That’s where our proprietary 50-step audit process comes in.

Over the years, we’ve gleaned insights and strategies from successful AI implementations. Even though ChatGPT is relatively new, we’ve been working with OpenAI’s APIs since the early GPT-3 days (2020), and many of the same principles apply. We’ve distilled these into a comprehensive guide that can help you drastically reduce your OpenAI costs - sometimes by up to 2,500X!

Now, we want to share a sneak peek into this process.

“Cost savings is great, but I want to focus on performance first”

True. The priority for starting out with any AI product is making sure the performance is adequate.

But here are two important truths:

There is plenty of low-hanging fruit for reducing OpenAI costs. Some of these changes will have only a minimal impact on performance. Many will improve both cost and performance.

4 Example steps from the 50-step cost-optimization audit

The 50-step audit is pretty comprehensive, and would likely increase the load times for this website if we posted the full version here. That said, here are 4 non-consecutive snippets from our cost-optimization strategy guide:

Audit Step #13: Saving 45-80% by asking the OpenAI model for brevity

If you’re using OpenAI completion or chatbot endpoints, you need to remember that you pay by the token. As a consequence, asking the OpenAI LLM for brevity can save tons of money.

For example, adding “be concise” to the prompt can shorten the model’s output.

Another example: asking GPT-4 for 5 examples instead of 10.
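As a rough sketch of how this might look in practice, the snippet below builds a chat request that combines a “be concise” instruction with a cap on generated tokens. The helper name `build_chat_request` and the 150-token cap are illustrative assumptions, not part of the audit. `model`, `messages`, and `max_tokens` are fields of OpenAI's chat completions request.

```python
def build_chat_request(user_prompt, model="gpt-3.5-turbo", max_tokens=150):
    """Build a chat completion payload that nudges the model toward short answers.

    Hypothetical helper: the name and the default cap are assumptions for illustration.
    """
    return {
        "model": model,
        "messages": [
            # Soft nudge: asks the model to keep its answer short.
            {"role": "system", "content": "Be concise. Answer in as few words as possible."},
            {"role": "user", "content": user_prompt},
        ],
        # Hard ceiling: generation stops once this many tokens have been produced.
        "max_tokens": max_tokens,
    }


request = build_chat_request("Give me 5 example product names for a hiking app.")
```

Note that `max_tokens` truncates the output rather than making it shorter by design, so the instruction in the prompt does most of the work and the cap acts as a safety net on spend.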

Audit Step #23: ~50x savings by switching from GPT-4 to GPT-3.5 Turbo

GPT-4 is great at generating high-quality responses to complex requests. If you’re doing something complex like evaluating another LLM, GPT-4 is a good fit.

But chances are GPT-3.5-Turbo can do as good a job on many of the tasks you’re asking of GPT-4. This is good, because it’s roughly 50 times cheaper to use GPT-3.5-Turbo than GPT-4.

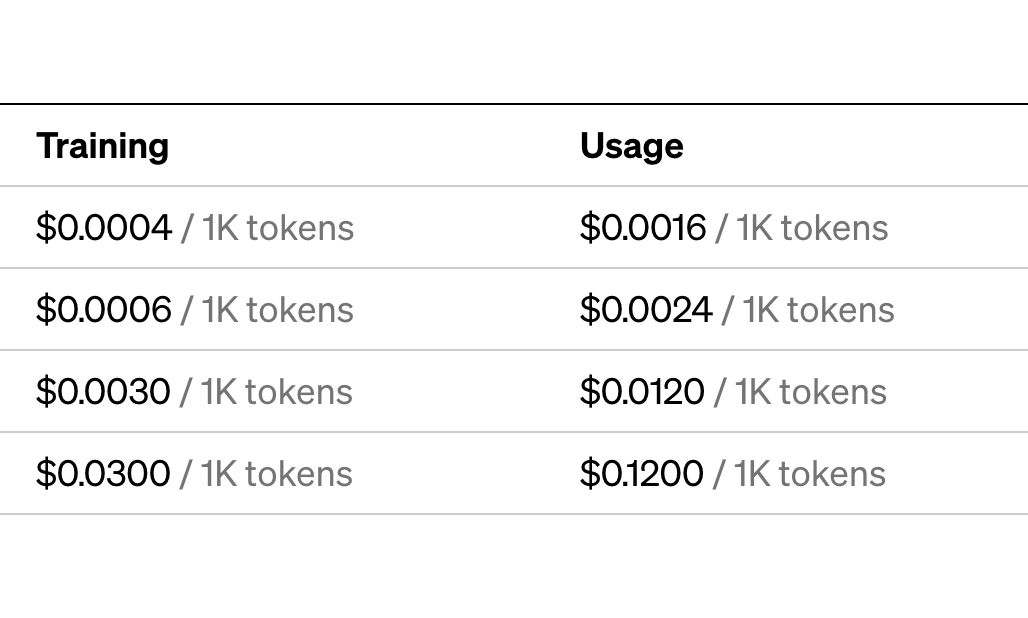

| | GPT-4-32k | GPT-4-8k | GPT-3.5 Turbo |
|---|---|---|---|
| Usage | 50 pages of document prompts | Smaller version, still powerful | Faster original ChatGPT |
| Prompt price (USD per 1K tokens) | $0.060 | $0.060 | $0.002 |
| Generation price (USD per 1K tokens) | $0.120 | $0.060 | $0.002 |

Don’t immediately jump to GPT-4 for tasks like summarization. You should see how far GPT-3.5-Turbo can go. It’s definitely more than enough for most document summarization tasks.
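To see what the price gap means for a specific workload, a small calculator like the one below can help. It is a sketch only: the prices are copied from the table above and will have changed since, and the 1,500-token prompt and 500-token response are made-up numbers. The exact ratio depends on which GPT-4 variant you compare against and on your mix of prompt and generation tokens.

```python
# Prices in USD per 1K tokens, copied from the table above (verify against current pricing).
PRICES = {
    "gpt-4-32k": {"prompt": 0.060, "generation": 0.120},
    "gpt-4-8k": {"prompt": 0.060, "generation": 0.060},
    "gpt-3.5-turbo": {"prompt": 0.002, "generation": 0.002},
}


def request_cost(model, prompt_tokens, generation_tokens):
    """Return the cost in USD of one request for the given model."""
    price = PRICES[model]
    total = prompt_tokens * price["prompt"] + generation_tokens * price["generation"]
    return total / 1000


# Hypothetical workload: a 1,500-token prompt and a 500-token response.
prompt_tokens, generation_tokens = 1500, 500
baseline = request_cost("gpt-3.5-turbo", prompt_tokens, generation_tokens)

for model in PRICES:
    cost = request_cost(model, prompt_tokens, generation_tokens)
    print(f"{model}: ${cost:.4f} per request, {cost / baseline:.1f}x GPT-3.5 Turbo")
```

Running this against your own token counts is a quick way to check whether a GPT-4 call is worth its price for a given task.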

Audit Step #27: Saving another ~5x by switching from GPT-3.5-Turbo to OpenAI embeddings

GPT-3.5-Turbo is cheap, but it’s not as cheap as using OpenAI embeddings. Embeddings let you store existing data in a vector store that you can look up later. Looking up something in a vector store is much cheaper than asking for an LLM generation. For many applications, a vector store is more predictable and has the same quality.

For example, if you store embeddings for Wikipedia in a vector store, you can ask it questions. Asking a question like “What is the biggest city in Wyoming” to a vector store retrieval system is ~5x cheaper than asking GPT-3.5-Turbo. Compared to GPT-4, this is 250x cheaper.

Important: Vector lookup isn’t free. It uses CPU inference, and at scale you need to be more mindful of the costs. That said, vector lookup is so cheap and fast compared to LLM generations that it might as well be free for the purposes of this comparison.
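To make the lookup step concrete, here is a minimal sketch of nearest-neighbor retrieval by cosine similarity. The three-dimensional vectors are made-up stand-ins for real embeddings, and the in-memory dictionary stands in for a vector store. In a real system the vectors would come from an embedding model and the search would be handled by a dedicated index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query_vector, store):
    """Return the stored text whose vector is most similar to the query."""
    return max(store, key=lambda text: cosine_similarity(query_vector, store[text]))


# Toy vectors with made-up values, standing in for real embeddings.
store = {
    "Cheyenne is the most populous city in Wyoming.": [0.9, 0.1, 0.0],
    "The Nile flows through northeastern Africa.": [0.0, 0.2, 0.9],
    "Python is a programming language.": [0.1, 0.9, 0.1],
}

# Pretend this is the embedding of "What is the biggest city in Wyoming".
query_vector = [0.8, 0.2, 0.1]
best_match = nearest(query_vector, store)
print(best_match)
```

The only per-query work here is arithmetic over stored vectors, which is why lookup costs so little next to generating tokens with an LLM.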

Audit Step #37: Saving another ~10x by switching from OpenAI embeddings to self-hosted embeddings

OpenAI isn’t the only embeddings provider. Long before OpenAI, there were plenty of ways of making your own. Those ways are still around.

One modern non-OpenAI embeddings tool (with similar quality) is Hugging Face’s SentenceTransformers.

```python
from sentence_transformers import SentenceTransformer

model = SentenceTransformer('paraphrase-MiniLM-L6-v2')

# Sentences we want to encode. Example:
sentences = ['Ideally we would pass in sentences from a parquet file here and embed them in batches']

# Sentences are encoded by calling model.encode(), which returns one vector per sentence
embeddings = model.encode(sentences)
```

A typical AWS g4dn.4xlarge costs around $1.204/hour for a spot instance.

This instance type has one 16GB NVIDIA T4 GPU. On a GPU like this, SentenceTransformers can embed 9,000 tokens (or ~7,000 words) per second.

At $1.204 per hour, one second of compute costs about $0.0003, so this amounts to about $0.0003 per 9,000 tokens.

Before OpenAI reduced their prices, this was ~10x cheaper than the Ada embeddings. Even after the Ada v2 price reductions, this is still ~3x cheaper than OpenAI.

It’s worth noting these approximate calculations are sensitive to load and embedding batch size. It’s not hard to see that self-hosting embeddings is cheaper. And this is before you start to think about things like ingress and egress fees.
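The per-token figure above can be reproduced with a few lines of arithmetic. This is a back-of-the-envelope sketch: it uses the hourly price and throughput quoted in this section and assumes the GPU is fully utilized for the whole billed hour, which real workloads rarely achieve. The function names are illustrative, and the hosted API price is left as a parameter so you can plug in whatever your provider currently charges.

```python
HOURLY_PRICE_USD = 1.204   # g4dn.4xlarge hourly price quoted in this section
TOKENS_PER_SECOND = 9000   # embedding throughput quoted in this section


def self_hosted_cost(tokens, hourly_price=HOURLY_PRICE_USD,
                     tokens_per_second=TOKENS_PER_SECOND):
    """Cost in USD to embed `tokens` tokens, assuming 100% GPU utilization."""
    seconds = tokens / tokens_per_second
    return seconds * hourly_price / 3600


def savings_ratio(api_price_per_1k_tokens):
    """How many times cheaper self-hosting is than a hosted API at the given price."""
    return api_price_per_1k_tokens / self_hosted_cost(1000)


cost_per_9000_tokens = self_hosted_cost(9000)
cost_per_1k_tokens = self_hosted_cost(1000)
print(f"${cost_per_9000_tokens:.6f} per 9,000 tokens")
print(f"${cost_per_1k_tokens:.6f} per 1,000 tokens")
```

Lower utilization scales the self-hosted cost up proportionally, so it is worth rerunning the numbers with your measured throughput before committing to a migration.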

In Summary

AI optimization is not just about performance but also about making the most of your budget without compromising on quality. Whether it’s by simply requesting brevity from the AI model or by using more cost-effective alternatives such as GPT-3.5-Turbo and OpenAI embeddings, there are many ways to drastically reduce costs, sometimes by up to 2,500 times!

However, it’s not always straightforward to determine which cost-saving techniques are the best fit for your particular AI application, and that’s where our expertise comes in. We’ve just shared four steps from our 50-step cost-optimization audit that have proven to yield substantial savings for our clients.

NOTE: This is a small sample of work done for multiple clients. Results may vary according to individual company circumstances and use-cases.

Let’s Get Started

Are you interested in discovering the other 46 steps? Do you want to learn how we can tailor these strategies to meet your company’s specific needs? Remember, optimizing your AI applications for cost does not mean you have to sacrifice performance. With the right strategies, you can achieve both.

Click the links below to book a call with us. Let’s explore together how we can maximize your AI budget while delivering top-tier performance for your applications.
